Instagram to Alert Parents When Teens Search for Self-Harm and Suicide Content

Instagram has announced a new safety feature that will alert parents if their teenage children repeatedly search for suicide or self-harm related content on the platform. The move is part of a broader effort by parent company Meta to strengthen online protections for young users amid rising global concern over child safety on social media.

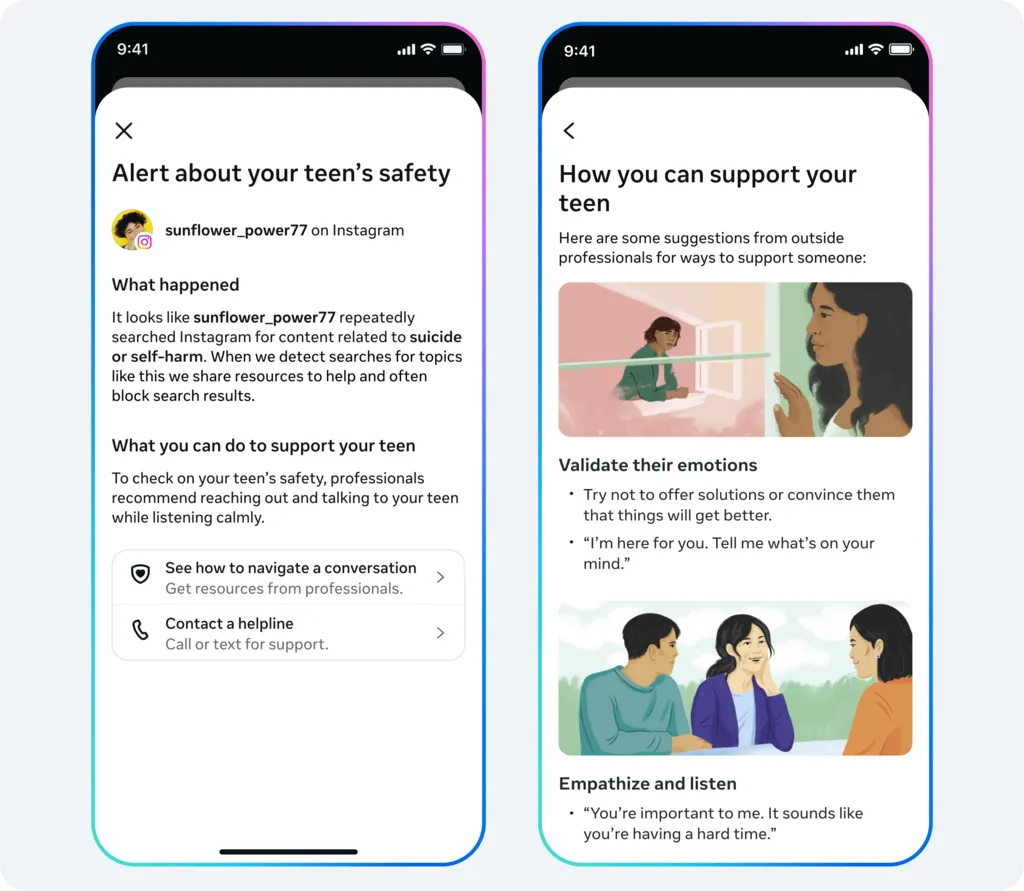

Under the new system, parents using Instagram’s supervision tools will receive notifications when their teen repeatedly searches for terms associated with self-harm or suicide. These alerts will be accompanied by expert resources aimed at helping families manage sensitive conversations and seek professional support where needed.

This is the first time Instagram will proactively inform parents about concerning search patterns, rather than simply blocking harmful material and directing teens toward external help services. Meta says the initiative is designed to provide early warnings of potential emotional distress and encourage timely intervention.

However, the announcement has sparked mixed reactions among child safety advocates and mental health charities, with some warning that such alerts could unintentionally cause panic and emotional distress for families.

Concerns from Child Safety Campaigners

Andy Burrows, chief executive of the Molly Rose Foundation, said the measure risks doing more harm than good if not handled carefully.

“This clumsy announcement is fraught with risk,” Burrows said, adding that sudden alerts may leave parents anxious and ill-prepared to have difficult conversations. The foundation was established by the family of Molly Russell, who died by suicide in 2017 at the age of 14 after being exposed to harmful content online.

Molly’s father, Ian Russell, also expressed serious concerns, questioning how parents might react to receiving such alarming notifications. Speaking to the BBC, he said: “Imagine being at work and getting a message saying your child may be thinking of ending their life. That level of panic could overwhelm many parents.”

Russell added that even with expert resources, the emotional shock of such alerts could make it difficult for families to respond calmly and constructively.

Balancing Safety and Emotional Impact

Meta has defended the move, saying alerts will only be sent if concerning search behavior occurs repeatedly within a short timeframe. The company emphasized that the system will “err on the side of caution” and that some notifications may occur even when there is no serious risk.

According to Meta, alerts will be delivered through email, text messages, WhatsApp, or directly within the Instagram app, depending on the family’s registered contact details. The feature will initially roll out in the UK, United States, Australia, and Canada, with global expansion planned later.

Ged Flynn, chief executive of suicide prevention charity Papyrus, welcomed greater parental awareness but argued that the real issue lies deeper.

“Children and young people are being pulled into dangerous online spaces by powerful recommendation systems,” Flynn said. “Parents don’t want alerts after exposure—they want harmful content removed before it ever reaches their children.”

Similarly, Leanda Barrington-Leach, executive director of children’s rights group 5Rights, stressed the need for platforms to be designed with child safety as a default. “If Meta is serious about protecting children, it must redesign its systems to be age-appropriate from the start,” she said.

Growing Global Pressure on Social Media Platforms

The announcement comes as governments worldwide tighten regulations around child online safety. Australia recently banned social media use for children under 16, while countries such as Spain, France, and the UK are considering similar policies.

Social media companies, including Meta, face mounting scrutiny over how their platforms affect young users’ mental health. Regulators argue that algorithms often push emotionally vulnerable teens toward harmful material, intensifying psychological risks.

Earlier research by the Molly Rose Foundation suggested that Instagram continued to recommend distressing content related to depression and self-harm to vulnerable teenagers. Meta disputed the findings, stating they misrepresented the company’s safety efforts.

Meanwhile, Meta CEO Mark Zuckerberg and Instagram head Adam Mosseri recently testified in a US court, defending their company against claims that its products deliberately target younger audiences.

Expanding Safety Measures

Instagram says it plans to expand the alert system in the coming months, including monitoring interactions with its AI chatbot. The company believes teens increasingly turn to artificial intelligence for emotional support, and concerning conversations may indicate deeper struggles.

Cyberbullying researcher Sameer Hinduja said that while alerts can be frightening, they could also offer a crucial opportunity for early support. “What matters most is the quality of guidance parents receive immediately after the alert,” he said. “Parents should not be left alone to figure out what to do next.”

The Debate Continues

While Meta insists its new alert system prioritizes teen safety, critics argue that responsibility should not be shifted onto families alone. Instead, they call for stronger preventive measures to ensure harmful content is not promoted by algorithms in the first place.

As social media platforms face intensifying legal, political, and public scrutiny, Instagram’s latest move highlights the complex challenge of protecting young users while balancing privacy, mental health, and parental involvement. Whether these alerts will help or harm remains a subject of intense debate.

Source: BBC